ChatGPT crossed 100 million users in January 2023, and within weeks, career advice forums on Reddit filled with a new kind of post: people sharing before-and-after screenshots of resumes rewritten by the tool. The transformations looked impressive. Vague bullet points became tight, metrics-laden accomplishment statements. Clunky summaries turned into polished professional profiles. And the praise was nearly universal: “Why didn’t I do this years ago?”

What nobody could see yet was the pattern forming on the other side of the hiring table.

The First Wave of AI-Polished Resumes

Through late 2023 and into 2024, AI resume rewriting became standard practice. Tools like ChatGPT, Claude, and Gemini made it trivially easy to paste in a rough draft and get back something that sounded like a senior executive wrote it. If you’re curious about how those three tools actually compare in practice, the differences are real, but the shared tendency toward polish is consistent across all of them.

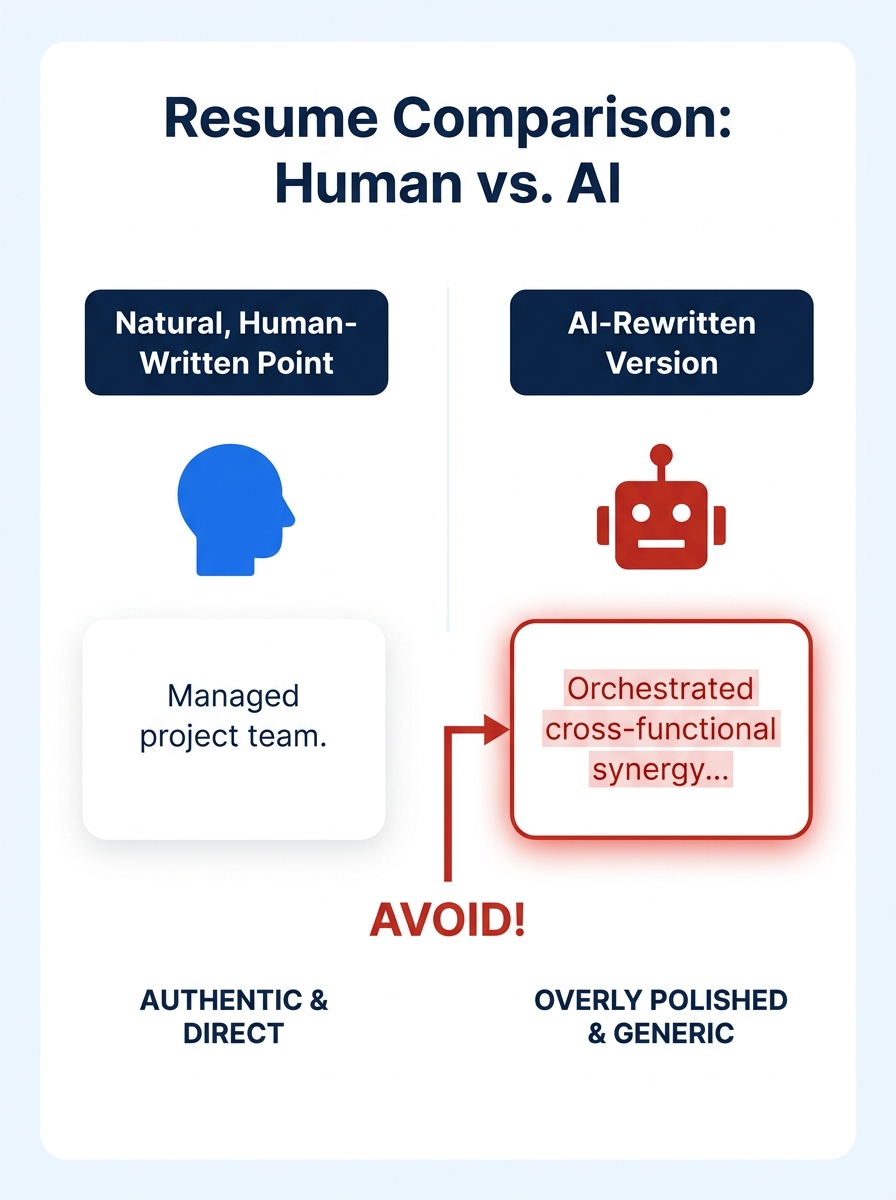

The appeal was obvious. A project manager who wrote “helped coordinate team schedules” could feed that into an AI and receive “orchestrated cross-functional resource allocation across 14-person project teams, reducing delivery timelines by 22%.” The before version undersold the work. The after version sounded like a Fortune 500 annual report.

And here’s where the trouble started: that 22% figure? Sometimes the candidate had no idea where it came from. The AI inferred it, hallucinated it, or extrapolated from a vague prompt. The language felt foreign because it was foreign. It belonged to the model, not the person.

Professional resume writers have long dealt with this tension. As TopResume’s guidance to clients notes, a professionally written first draft often “includes language that seems foreign to you,” and the writer must carefully strike a balance between conveying authenticity and meeting resume conventions. The difference is that a human writer collaborates over multiple rounds, asking questions, verifying claims, adjusting tone. An AI tool skips all of that: it returns a finished draft in eight seconds with zero verification.

Hiring Managers Started Reading Between the Lines

By mid-2024, recruiters and hiring managers were comparing notes. The resumes crossing their desks had started to sound eerily similar. The same superlatives. The same sentence structures. The same vocabulary choices that no actual human defaults to in conversation.

One Forbes contributor on a human resources council put it bluntly: “I appreciate résumés that feel authentic, where someone has taken the time to tell their unique story, not just say what I want to hear.” That sentiment became a recurring theme. Hiring managers don’t evaluate resumes in isolation. They read dozens, sometimes hundreds, for a single role. When thirty of those resumes describe “driving cross-functional synergies to optimize stakeholder engagement,” the language stops communicating competence and starts communicating copy-paste.

The mismatch between the resume and the person’s actual brand became glaringly visible during interviews. A candidate whose resume described them as someone who “architected end-to-end digital transformation strategies” would show up and speak in plain, direct sentences about their actual work. The gap between the document and the person created doubt. Hiring managers started asking themselves: did this person write this, or did a machine? And if a machine did, what else might not be accurate?

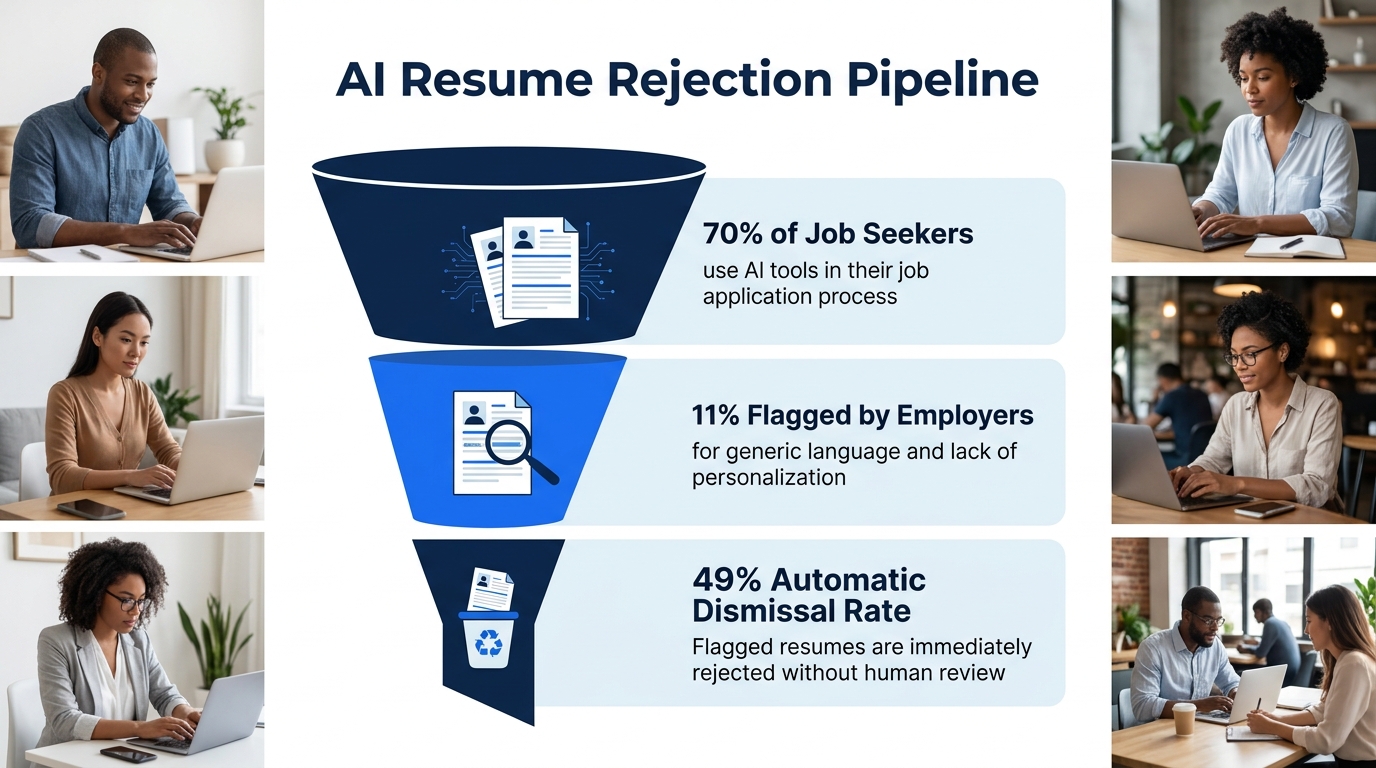

Concern about the authenticity of AI-written resumes grew fast. According to ResumeGeni’s 2026 employer detection report, approximately 70% of job seekers now use AI tools when creating their resumes. When the majority of candidates use the same tools, the output converges. Employers began flagging resumes that relied on generic superlatives without concrete evidence of professional performance. Specific, quantifiable achievements with precise metrics became the signal that a human had actually written (or at least verified) the content.

When Identical Language Became a Red Flag

The detection conversation shifted during 2025 from hypothetical to operational. Companies didn’t need sophisticated AI detection software. The tells were visible to anyone reading carefully.

Here’s what kept showing up:

- Inflated scope language. Candidates at coordinator-level positions describing themselves as having “spearheaded enterprise-wide initiatives.” (When you’re two years into your career, nobody believes you were running enterprise-wide anything.)

- Metric fabrication. Precise-sounding numbers with no grounding. “Increased revenue by 34.7%” from someone who had no access to revenue data in their role.

- Vocabulary mismatches. A customer service representative whose resume used words like “operationalized” and “synergistic” but who, in the phone screen, spoke naturally and directly about helping frustrated customers.

- Identical phrasing across candidates. When a hiring manager sees the exact phrase “results-oriented professional with a proven track record of delivering impactful solutions” on three resumes in one afternoon, the phrase becomes meaningless.

The data backs up the consequences. A Dice.com survey of hiring managers found that nearly 49% of AI-generated resumes are automatically dismissed. Not flagged for review. Dismissed. That’s roughly a coin flip’s chance of your resume hitting the trash before anyone weighs your qualifications.

Reddit’s resume communities have documented the downstream anxiety this creates. Job seekers who wrote their own resumes from scratch began running them through AI detectors and panicking when the tools flagged their writing as machine-generated. One poster described rewriting their resume multiple times, running it through GPTZero after each pass, trying to get a “human” score. The irony is thick: real humans writing real resumes, contorting their language to prove they’re not robots.

If your resume is already triggering red flags you can’t identify, the AI polish you added might be the culprit rather than the cure.

The Real Cost of Losing Your Voice

The risks of AI rewriting go deeper than detection. The real damage is to your personal brand, the thing that’s supposed to differentiate you from every other qualified candidate.

Hiring managers want to know who you are as a person: your work ethic, your attitude, your work style, and whether you’ll fit into the workplace culture. That insight, from WorkItDaily, captures something the AI resume conversation often misses. A resume doesn’t exist to prove you can list accomplishments. It exists to give a hiring manager a reason to want to talk to you. And that reason is almost always tied to something specific and human.

Consider two summary statements for the same product designer:

AI-polished version: “Dynamic and innovative product designer with 8+ years of experience driving user-centric design solutions across enterprise SaaS platforms, consistently delivering measurable improvements in user engagement and retention metrics.”

Voice-preserved version: “Product designer, 8 years, mostly B2B SaaS. I’m the person teams pull in when a feature has strong usage data but terrible satisfaction scores. My best work happens in the gap between what users do and what they actually want.”

The second version tells you something about how this person thinks, what they value, and what kind of work energizes them. The first version could describe any product designer on earth. That gap between a generic, polished document and an authentic representation of who you are is the personal branding mismatch that costs interviews.

Harvard’s career services center makes this point with characteristic brevity: avoid flowery language. Keep things concise and factual. The best resumes have always worked this way. AI tools, trained to produce impressive-sounding text, push in the opposite direction.

And the mismatch compounds when you reach the interview stage. We’ve covered why storytelling outperforms credential lists in first-question interview scenarios, and the principle applies directly here. If your resume tells one story and your mouth tells another, the interviewer notices. They might not call it out, but the dissonance registers. Trust erodes before you’ve finished your first answer.

The Recalibration That’s Happening Now

Smart job seekers have started treating AI differently. The shift began in earnest around early 2026, and it looks less like abandoning AI and more like reassigning its role.

The approach that’s working treats AI as an editor for structure and format, not as a ghostwriter for voice. You write the first draft. You use your own words, your own descriptions of what you actually did, your own way of explaining complex work. Then you bring in AI for specific, bounded tasks:

- Checking for passive voice and suggesting active alternatives (a persistent weakness in self-written resume bullets)

- Identifying places where you could add a metric you already know but forgot to include

- Reformatting inconsistent bullet points so they follow a parallel structure

- Flagging jargon that won’t translate outside your current company

The key distinction is that the AI is cleaning up your language rather than replacing it. When AI generates the content from scratch, it produces what it thinks a resume should sound like, based on thousands of existing resumes in its training data. That’s how you end up sounding like everybody else.

Tip: Before you paste your resume into any AI tool, write down the three things you most want a hiring manager to remember about you. After the AI edits, check whether those three things still come through clearly in your own voice. If they don’t, the tool removed the wrong parts.

The broader conversation about when AI rewriting helps versus hurts has matured considerably. Career coaches and hiring professionals are converging on the same view: AI is good at mechanics and bad at meaning. Use it for the former, protect the latter.

Where the Authenticity Gap Stands Today

The paradox of AI resume tools in 2026 is that they’ve made it easier than ever to produce a resume that looks right while making it harder to produce one that feels right. Hiring manager expectations have shifted accordingly. They’re reading for signals of a real person behind the document, and the absence of those signals has become its own form of disqualification.

The roughly 49% automatic dismissal rate for AI-generated resumes is unlikely to shrink. If anything, as detection methods improve and as hiring managers develop better instincts for spotting AI-polished language, that number will probably climb. The candidates who stand out will be the ones whose resumes sound like a specific human being with specific experiences and a specific way of talking about their work.

Your resume doesn’t need to be perfect. It needs to be yours. The rough edges, the slightly unconventional phrasing, the way you describe a project that reveals how you actually think about problems: those are the features, not the bugs. AI can help you clean up grammar, tighten structure, and catch formatting errors. But the moment it starts replacing your words with its words, you’ve traded the one thing that makes your application memorable for a coat of paint that looks identical to everyone else’s.

The brand authenticity gap closes when you stop asking “how do I make this sound more professional?” and start asking “does this still sound like me?” Those are different questions, and only one of them leads to interviews.