“Reduced migration time by 30% through automated scripting” tells a hiring manager three things at once: what changed, by how much, and how you did it. The generic alternative on most data migration resumes (“Responsible for migrating data between legacy and cloud systems”) communicates none of those. The difference between these two bullets is the difference between callbacks and silence.

Infrastructure professionals consistently struggle with this translation problem. You know the project was massive. You know you kept production systems online during a terabyte-scale cutover. But the bullet on your resume reads like a job description, and the hiring manager skips it in under two seconds. The fix follows a specific sequence, and each phase builds on the one before it.

Phase 1: The Generic Bullet Graveyard

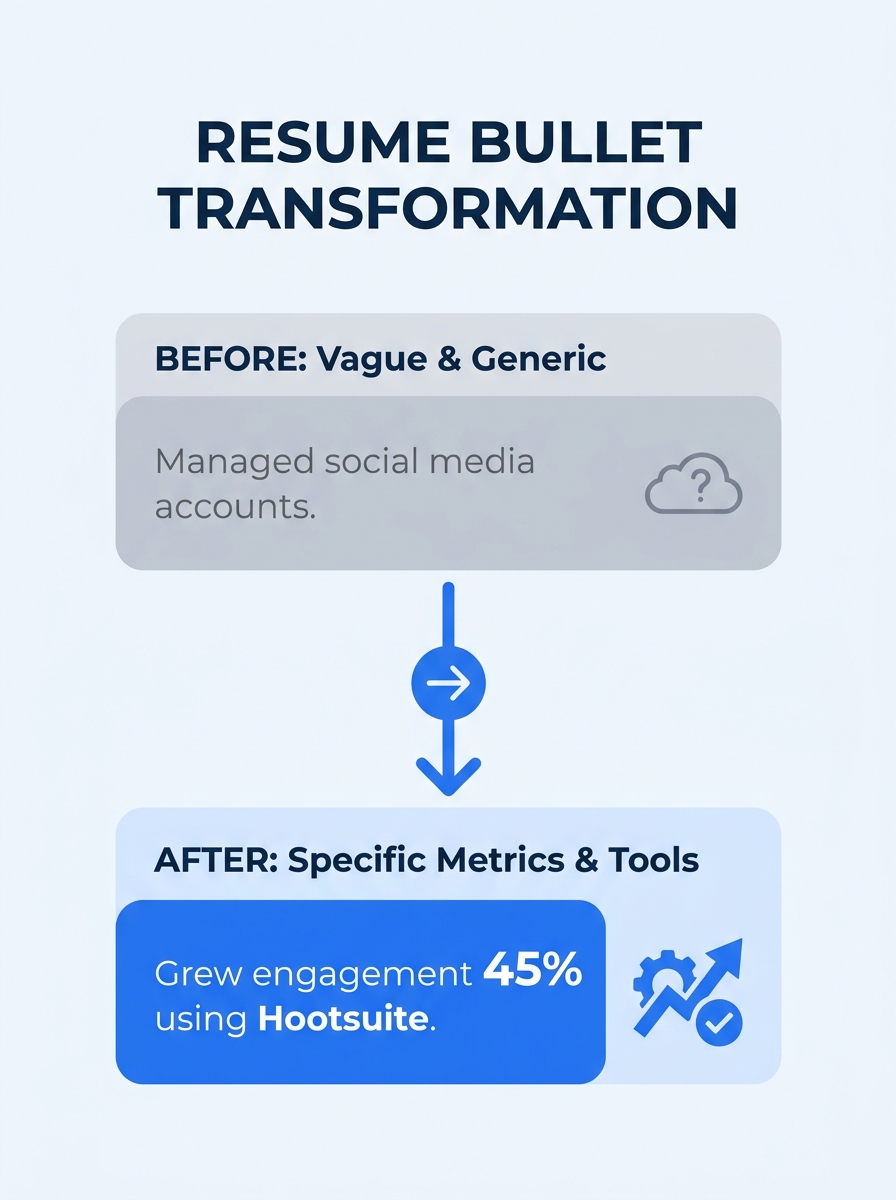

Every data migration resume starts in the same place. You open a blank document (or pull up your old resume) and write what you did in the broadest possible terms. “Managed data migration projects.” “Worked with ETL tools to transfer data.” “Supported infrastructure upgrades.”

These bullets fail for a precise reason: they describe activities, not outcomes. A hiring manager reading “managed data migration projects” learns that you had a job. They don’t learn whether you migrated 50 GB or 50 TB, whether the migration took a weekend or six months, whether anything broke, or whether the project saved money.

TealHQ’s data center migration resume guide puts it directly: use metrics to demonstrate successful project outcomes, such as reduced downtime or improved efficiency. The emphasis falls on outcomes, because outcomes are what hiring managers evaluate when they’re comparing you against 200 other applicants.

If your resume currently has bullets that could apply to any data migration professional at any company, you’re in the generic bullet graveyard. Recognizing that is the first phase of fixing it.

Phase 2: Identifying the Five Metrics Hiring Managers Actually Track

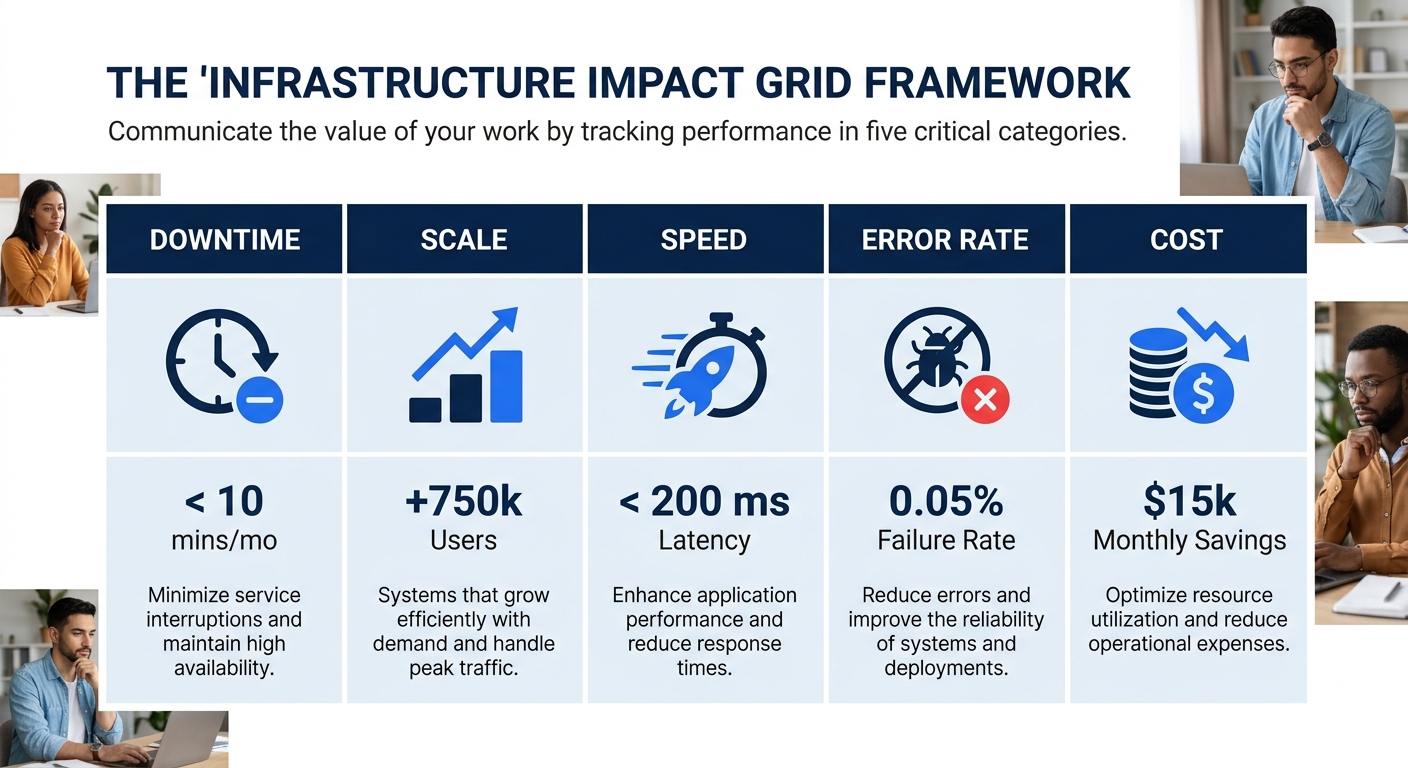

The second phase is figuring out what to measure. Data migration work generates dozens of possible numbers, but hiring managers consistently respond to five categories of technical resume metrics. I call this the Infrastructure Impact Grid:

| Metric Category | What It Measures | Example Bullet Fragment |

|---|---|---|

| Downtime | System availability during/after migration | “Zero unplanned downtime across 500+ server migration” |

| Scale | Volume of data, records, or systems moved | “Migrated 12 TB across 3 source systems to AWS RDS” |

| Speed | Time reduction vs. baseline or estimate | “Completed 3 weeks ahead of projected timeline” |

| Error Rate | Data integrity and validation results | “99.97% record accuracy post-migration, 0.03% fallout rate” |

| Cost | Budget savings or efficiency gains | “$10,000 annual cost reduction through consolidated storage” |

Enhancv’s infrastructure engineering guide reinforces this pattern: add specific metrics like percentage uptime, deployment frequency, or cost savings to each experience bullet point. The key word is specific. “Improved efficiency” isn’t a metric. “Reduced query latency by 40ms after index restructuring” is.

You don’t need all five categories in every bullet. But across your entire experience section, you should hit at least three of them. A resume with zero downtime mentioned, zero cost figures, and zero scale indicators looks like you weren’t paying attention to the business side of your work.

Tip: Dig through old project documentation, Jira tickets, post-mortem reports, and stakeholder emails. The numbers you need for your data migration resume bullets are almost always buried in artifacts you’ve already created. You produced the metrics at work. Now you need to move them onto the page.

Phase 3: When the Numbers Started Changing Everything

Here’s where the transformation gets concrete. Take a real bullet from the generic graveyard: “Developed scripts to support data migration activities.”

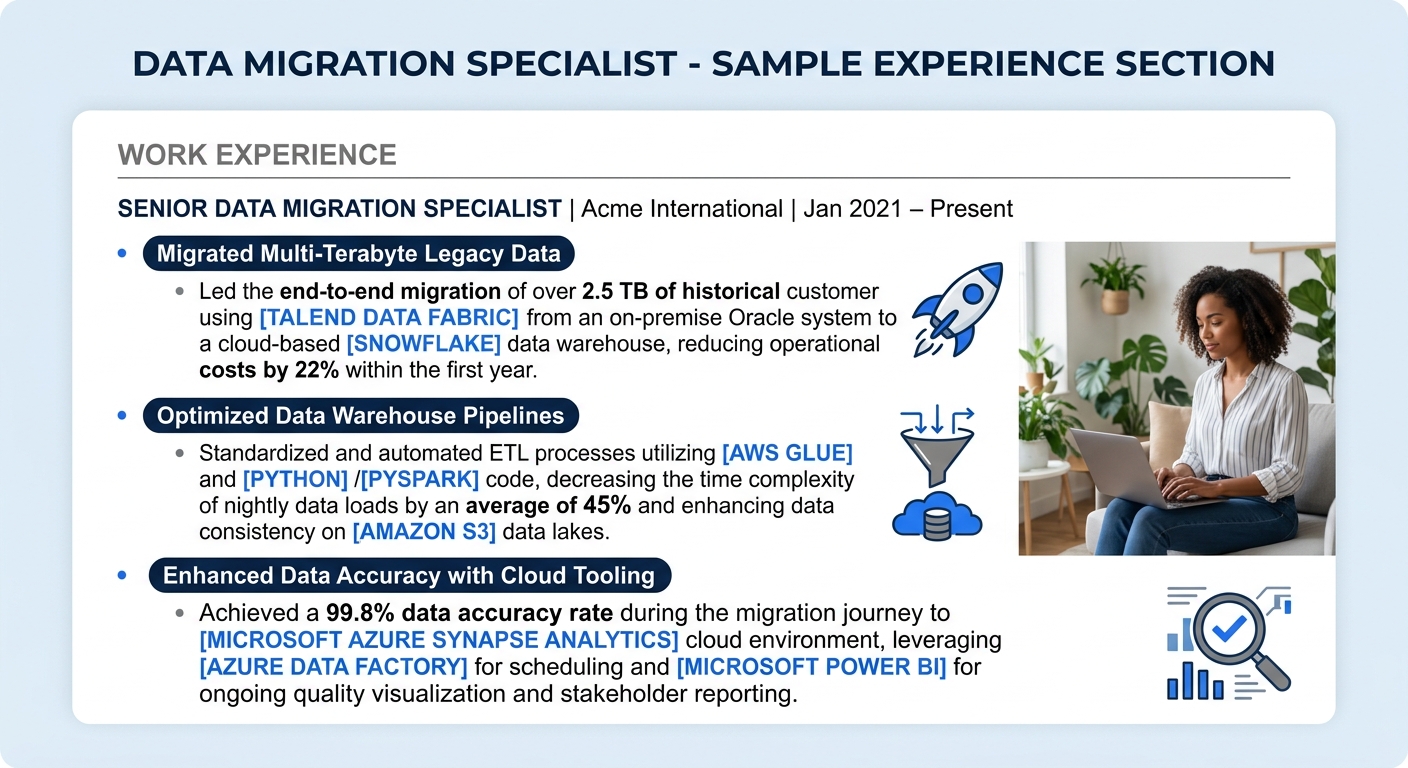

Apply the Infrastructure Impact Grid, and you get something like what LiveCareer’s 2026 data migration specialist guide documents: “Developed automated scripts that reduced migration time by 30%, allowing for quicker project turnaround and increased client satisfaction.”

That single revision changes what a hiring manager understands about you. The 30% figure gives them a benchmark. The word “automated” signals technical depth. The connection to client satisfaction ties your work to business results.

Wozber’s infrastructure project manager guide makes the math explicit: “Managed a $5 million project budget? Achieved a 98% on-time completion rate? Numbers draw attention and lend credibility to your claims, providing tangible evidence of your effectiveness.” The pattern holds whether you’re writing about a $500K migration or a $50M infrastructure overhaul.

For anyone going through the resume repositioning process after a layoff, this phase is where the real competitive advantage emerges. Two candidates with identical experience at the same company will produce wildly different resumes depending on whether they quantified their infrastructure project quantification or left it as narrative prose. The candidate with “migrated 8.2 million customer records with 99.9% integrity” beats “successfully completed customer data migration” every single time.

Phase 4: Translating ETL Jargon for Two Different Audiences

By this phase, you’ve got quantified bullets. The next problem: your resume needs to pass through an ATS keyword filter and make sense to a human hiring manager who may or may not know what Informatica PowerCenter does.

The ETL tools resume language challenge is real. Resume Worded’s 2026 ETL developer guide emphasizes that developers must demonstrate deep understanding of tools like Informatica, Talend, and DataStage, plus proficiency in scripting languages like Python and SQL. TealHQ’s ETL developer guide adds Shell scripting and cloud data services (AWS, Azure, GCP) to the must-list.

So the ATS needs to see those tool names. But the hiring manager needs to understand what you did with them.

The solution is a two-layer approach in each bullet. Lead with the business outcome, then anchor the technical method:

Weak: “Used Informatica PowerCenter for ETL processes in data warehouse project.”

Strong: “Reduced nightly batch processing window from 6 hours to 2.1 hours by redesigning 14 Informatica PowerCenter ETL workflows, eliminating 3 redundant transformation steps.”

The strong version names the tool (Informatica PowerCenter) for the ATS, quantifies the result (6 hours to 2.1 hours) for the hiring manager, and specifies scope (14 workflows, 3 redundant steps) for the technical interviewer. Three audiences, one bullet.

This dual-audience problem shows up across the gap between ATS optimization and hiring manager readability. Your specialization resume writing approach needs to serve both systems, and leading with the number is the most reliable way to do it.

Lead with the business outcome, then anchor the technical method. Three audiences—the ATS, the hiring manager, the technical interviewer—get served by one well-structured bullet.

Phase 5: The Cloud Migration Rewrite

Cloud infrastructure changed what counts as a data migration project. Five years ago, “migrated on-premises database to cloud” was a differentiator. Now it’s table stakes. The bar has moved.

Current data migration resume bullets need to specify which cloud platform, what architecture pattern, and how the migration affected operational costs. “Migrated 4.2 TB Oracle database to AWS Aurora PostgreSQL, reducing monthly compute costs by $3,400 and improving read replica latency from 12ms to 3ms” is the level of detail that stands out in 2026.

Certifications reinforce this specificity. AWS Certified Solutions Architect, Microsoft Certified Azure Solutions Architect Expert, and VMware VCP-DCV all signal that your cloud migration experience has been validated independently. List them in a dedicated certifications section, not buried in your bullet points, and make sure your skills section reflects current platforms rather than ones you haven’t touched in three years.

The resume format itself matters here too. A hybrid structure works best for data migration specialists: a summary section that positions your specialization, a categorized skills block covering ETL frameworks (Informatica, Talend, DataStage), scripting languages (Python, SQL, Shell), and cloud platforms (AWS, Azure, GCP), followed by reverse-chronological experience with quantified bullets.

If your executive summary is currently packed with adjectives like “results-driven” and “detail-oriented,” you’re wasting your most valuable resume real estate. That space should contain your migration scale, your primary technical stack, and your biggest quantified win. Treating your summary as prime space rather than a buzzword dump is one of the highest-ROI changes you can make.

The State of Play

The data migration resume that gets callbacks in the current market looks nothing like the one that worked in 2020. ATS systems are more sophisticated in how they parse and score applications, hiring managers are scanning faster (recruiters now average roughly 11 seconds per resume), and the competition for infrastructure roles has intensified as displaced engineers from major tech layoffs enter the same applicant pools.

What survives this screening gauntlet is specificity. Every bullet on your resume should answer three questions: what did you change, by how much, and what tools or methods did you use? If a bullet doesn’t answer all three, it’s a candidate for revision.

The Infrastructure Impact Grid (Downtime, Scale, Speed, Error Rate, Cost) gives you a diagnostic tool. Print your current resume, highlight every number you find, and map each one to a grid category. If you’ve got zero numbers in two or more categories, those are the gaps to fill first. The metrics exist in your project history. Your job is to surface them, quantify them, and structure them so that a hiring manager who has never configured an ETL pipeline can still understand exactly what you delivered and why it mattered to the business that paid for it.