Recruiters at companies using applicant tracking systems describe a paradox that has sharpened over the past two hiring cycles. The average resume in their pipeline has gotten objectively better: formatting is cleaner, bullet points are tighter, typos have nearly disappeared. And yet, according to a survey cited by The Interview Guys, 67% of HR leaders say AI-generated resumes have actually slowed their hiring process. The resumes look great. They all look the same kind of great, and that uniformity has become the core problem.

The risks of AI resume writing aren’t theoretical. They’re playing out right now in hiring pipelines across industries, creating a situation where the tool that was supposed to give you an edge is actively eroding it for millions of applicants. Knowing where the line sits between AI as a genuinely useful writing assistant and AI as a homogenizer that strips your resume of everything memorable is the difference between using the technology wisely and letting it work against you.

The Sameness Problem

When you feed your resume through ChatGPT, Claude, or Gemini with a prompt like “make this more professional,” the outputs converge on a recognizable style. Verbs get swapped for stronger-sounding alternatives, professional summaries inflate into confident declarations of impact, and every bullet point settles into the “achieved X by doing Y, resulting in Z% improvement” template. The language becomes polished in a way that strips out personality. As research from Scale.jobs has documented, when recruiters encounter the same templated language across multiple applications, it diminishes the perceived sincerity of every applicant in the pile, often leading to outright dismissal.

This directly threatens your personal brand. The details that once made you distinctive get sanded down into generic corporate-speak because AI tools are trained on enormous amounts of existing text, resumes included, and naturally regress toward the mean. The output sounds competent and anonymous, which is exactly the combination that makes a recruiter move on to the next candidate. We’ve previously explored how AI screening tools actually evaluate resumes, and here’s the irony: many of those same systems are getting better at detecting the AI-generated uniformity that applicants are paying to produce.

The damage extends beyond ATS screening into human review. A survey of job seekers found that 1 in 10 were denied a job when the interviewer discovered they had used ChatGPT to write their application materials. That rejection rate climbs even higher for communications, writing, and marketing roles where the ability to craft original prose is part of the job itself. If your resume reads like every other AI-polished resume in the stack, you’ve already lost the thing that was supposed to set you apart.

Where AI Earns Its Keep

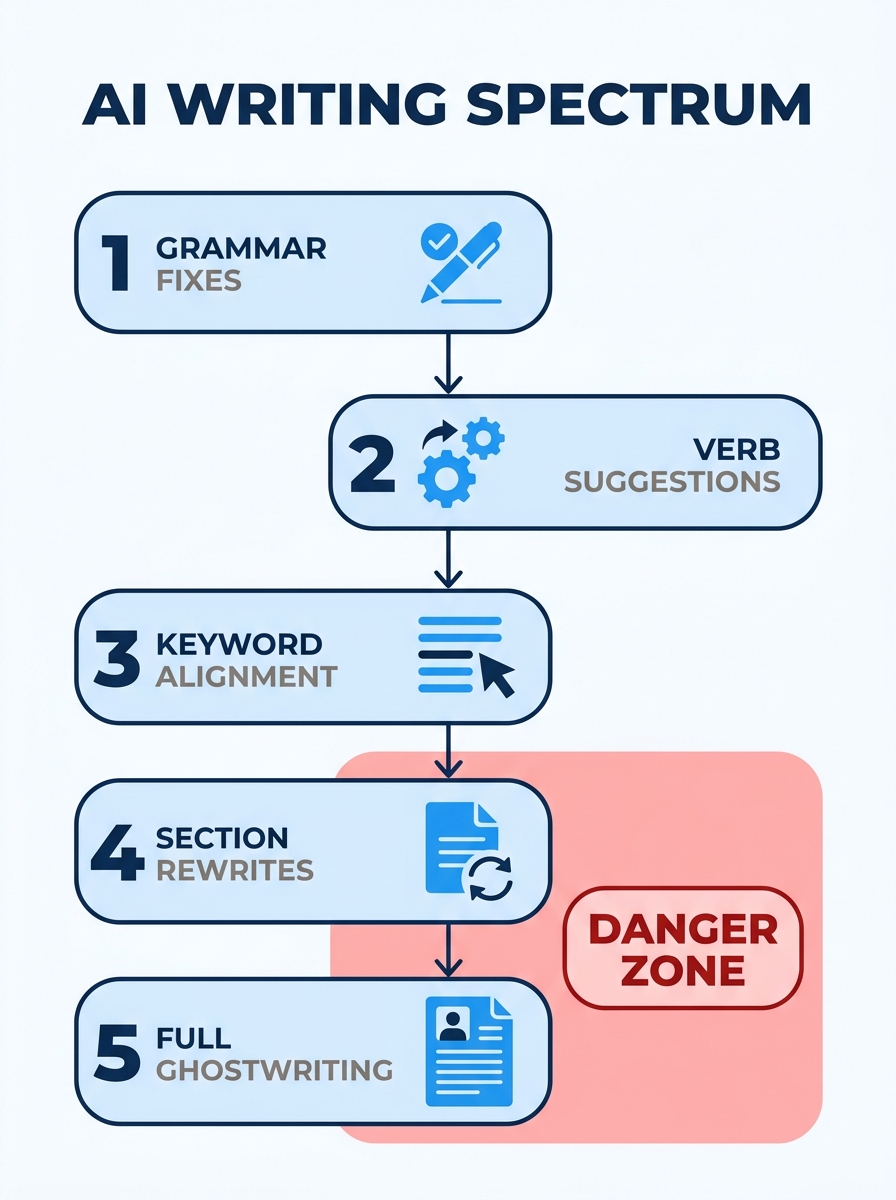

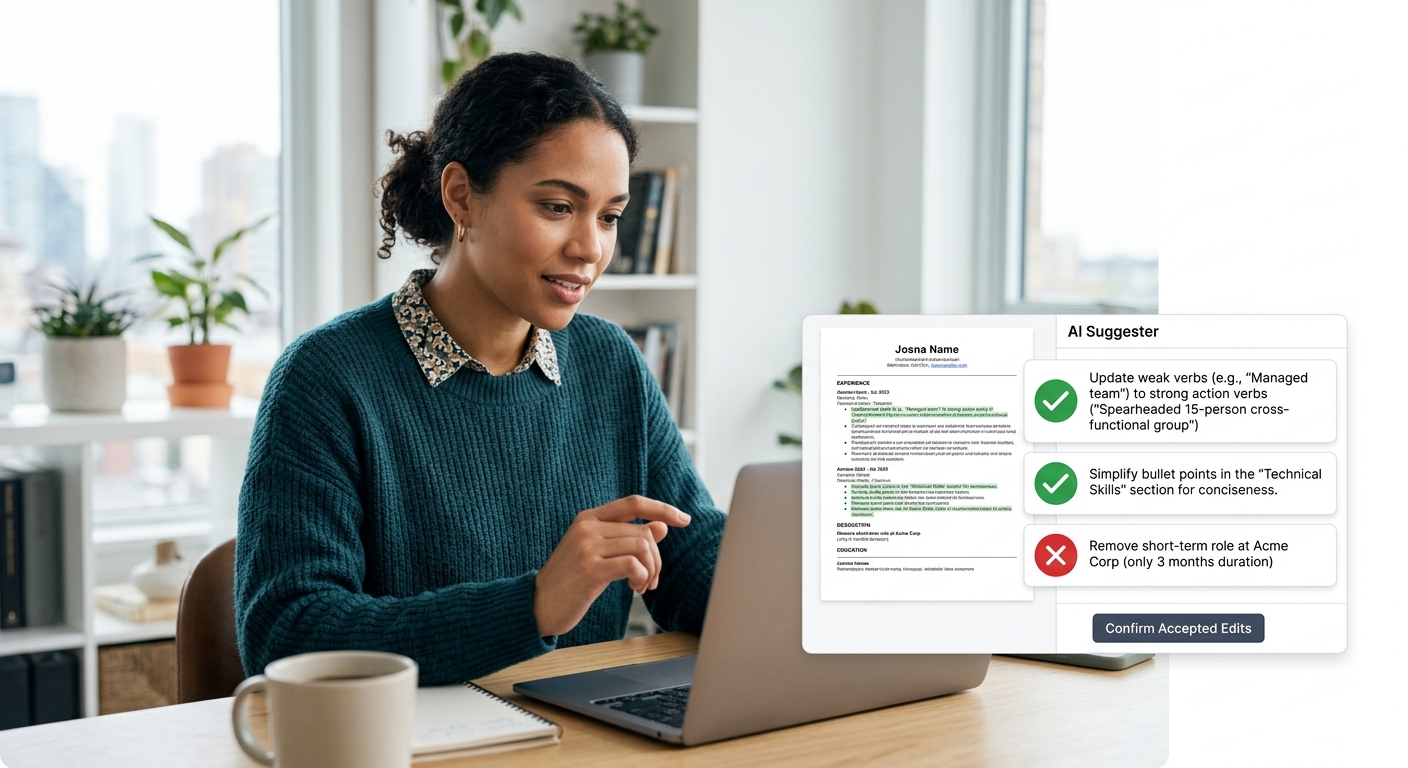

None of this means you should abandon AI tools entirely. The technology is genuinely useful for specific, bounded tasks that don’t require it to know who you are or what makes your career story worth reading. The key is treating AI as an editor with limited scope, not as the author of your professional narrative. Recognizing the tools’ limitations up front keeps you from handing over more control than you should.

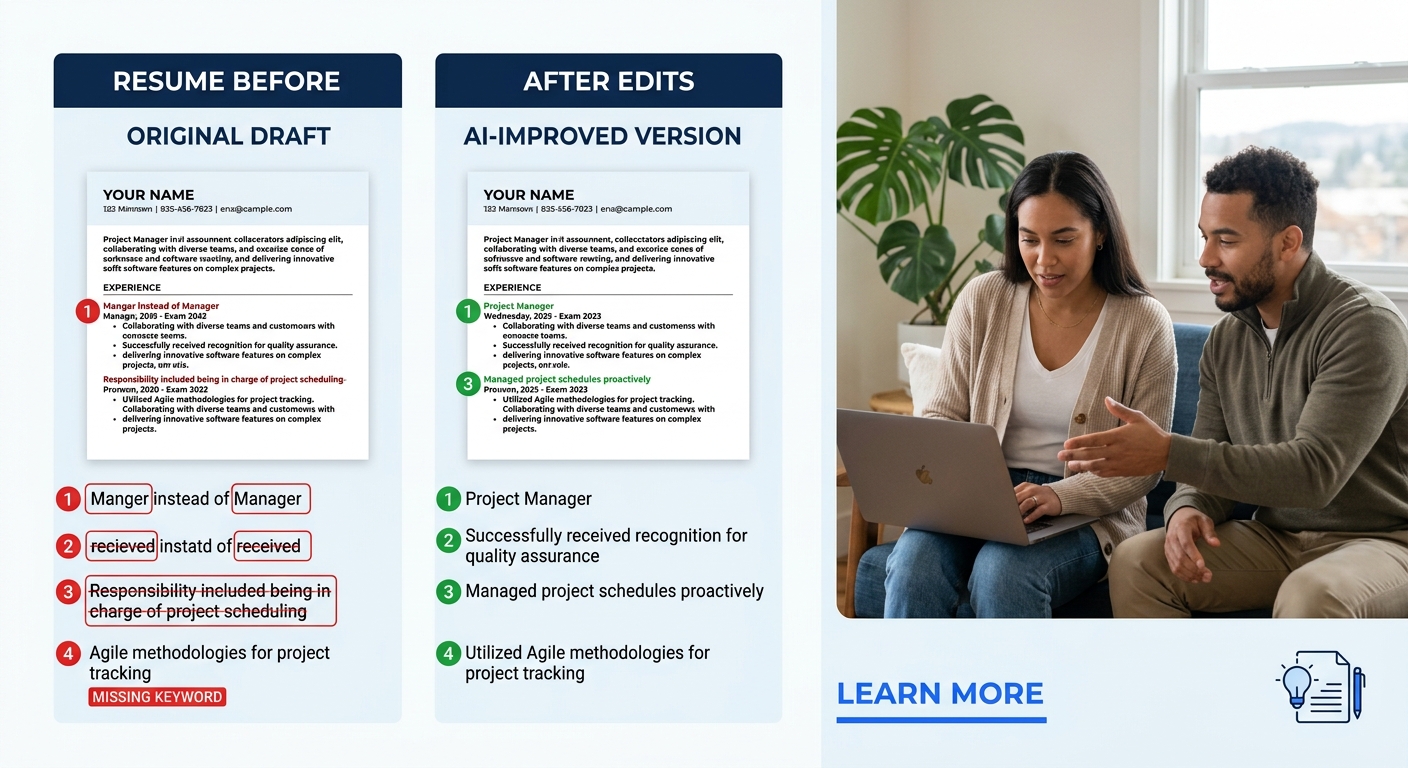

Grammar and clarity improvements are the safest use case. Running your finished resume through a tool that catches awkward phrasing, passive voice, or typos adds value without risking your authenticity. An MIT Sloan study found that error-free resumes can increase hiring likelihood by roughly 8%, and letting AI handle proofreading is a straightforward win. If you struggle with replacing weak, passive language with action-driven phrasing, AI can suggest stronger verb choices for you to evaluate and adopt selectively rather than accepting every suggestion wholesale.

ATS keyword alignment is the second legitimate use case, and it’s the one where knowing when to reach for AI matters most. An estimated 88% of resumes aren’t ATS-friendly because they don’t include relevant keywords from the job description, according to data shared across multiple job search communities. Tools like Jobscan can identify gaps between your resume and a specific posting, flagging terms you should incorporate. But the critical distinction is one that career advisors have made for years: ATS optimization means using the same vocabulary the employer uses to describe skills you genuinely have. It does not mean stuffing your resume with keywords that represent capabilities you can’t demonstrate in an interview. We compared how different AI tools handle this translation work, and the quality varies dramatically between platforms.

The moment you start adding keywords for skills you can’t demonstrate, you’ve crossed from optimization into fiction.
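To make the keyword-gap idea concrete, here is a minimal sketch of the comparison in Python. It is not how Jobscan or any particular ATS works; the stopword list, the function names, and the sample posting and resume text are all hypothetical, chosen only to illustrate the principle of finding posting vocabulary that is missing from a resume.

```python
import re

# Hypothetical, deliberately tiny stopword list for this sketch.
STOPWORDS = {"a", "an", "and", "the", "of", "to", "in", "for",
             "with", "on", "or", "is", "are", "we", "you", "our"}


def terms(text):
    """Lowercase the text and return its distinct non-stopword terms."""
    # Allow characters such as + # . inside a word so "c++" or "node.js" survive.
    words = re.findall(r"[a-z][a-z0-9+#.]*", text.lower())
    # Strip a trailing period picked up at the end of a sentence.
    return {w.rstrip(".") for w in words} - STOPWORDS


def keyword_gaps(resume, posting):
    """Return posting terms that never appear in the resume, sorted alphabetically."""
    return sorted(terms(posting) - terms(resume))


# Hypothetical sample text, not taken from any real posting or resume.
posting = "Seeking analyst with SQL, Tableau, and stakeholder reporting experience."
resume = "Built SQL dashboards and weekly reporting for the finance team."

print(keyword_gaps(resume, posting))
```

The result is a list of candidates to review, not a list of words to paste in. A term belongs on your resume only if it describes a skill you actually have; everything else on the list is noise, or a sign that the role may not be a fit.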

Formatting is the third area where AI helps without significant risk. Complex layouts with tables, columns, icons, and graphics routinely break ATS parsing, and AI-powered resume builders can enforce clean, single-column structures that pass through screening systems intact. If you’re unsure whether your current format is causing problems, our breakdown of which resume templates actually pass ATS screening covers the specific design elements to look for and the ones to eliminate.

The Line Between Assistance and Autopilot

The difference between AI that helps and AI that hurts comes down to a single question: after the AI finishes, does the resume still sound like you said it? If you handed it to a former colleague, would they recognize your voice, your specific accomplishments, the particular way you describe your work? Or would they see a polished document that could belong to anyone with a similar job title?

The danger zone begins the moment you ask AI to generate content from scratch rather than refine content you’ve already written. When you paste in a job description and say “write me a resume for this role,” the tool has no choice but to invent plausible-sounding accomplishments drawn from its training data. The result might include claims like “improved system performance by 40%” or “led cross-functional team of 8” that are statistically common resume phrases but bear no relationship to your actual work. This is where the risks of AI resume writing escalate from “slightly generic” to “actively deceptive,” and it’s a shift that more hiring managers are learning to spot. Over-reliance on AI-generated content regularly produces resumes that fail to highlight unique strengths because the AI has no access to what those strengths are.

A better approach treats AI as what Kajabi’s research on personal branding calls “a thinking partner for positioning work.” You write the first draft yourself, drawing on your real experience and genuine accomplishments, including specific numbers even if they’re estimates. Then you use AI to tighten the language, align terminology with the job posting, and catch errors. The final document should contain your stories, your metrics, and your career arc, expressed more clearly than you might have managed alone. The AI sharpens what’s already true, and the work of deciding what’s true remains yours. The supposed trade-off between authenticity and ATS optimization dissolves once you realize you can score well with automated screening and still sound like a real human wrote about a real career.

This also matters when you get past the resume stage. When your application leads to an interview, how you tell the story behind your resume has to match what the document claims. AI can’t coach you through that conversation, and a resume full of fabricated metrics will fall apart the moment an interviewer asks you to walk them through a specific project. The document and the person behind it need to be telling the same story.

The Question Nobody Wants to Sit With

Here’s what makes this conversation uncomfortable: the better AI tools get at mimicking natural writing, the harder it becomes to draw a clean line between “AI-assisted” and “AI-written.” A resume where AI suggested three stronger verbs and flagged two missing keywords feels meaningfully different from one where AI generated the entire professional summary from a job posting. But both technically involved AI. And as detection tools improve alongside generation tools, candidates face a moving target where the acceptable level of AI involvement keeps shifting based on industry norms, company culture, and the individual preferences of whoever reviews the application.

The 67% of HR leaders who say AI resumes are slowing hiring aren’t objecting to the technology itself. They’re objecting to the flood of generic, interchangeable applications that make it harder to find the candidates who actually fit. That frustration is aimed at candidates who used AI as autopilot, not at candidates who used it as a proofreader. But the hiring manager reading your resume doesn’t know which category you fall into, and the sameness of AI-polished language makes it difficult for them to tell. Your resume has to do that work for you, and it can only do it if the substance underneath the polish is distinctly, specifically yours.

The most reliable test of whether AI has helped or hurt your resume is a simple one: can you explain every claim on the page, in detail, during a 30-minute interview? Can you tell the story behind every metric, name the tools you listed, describe the teams you mentioned? If yes, the AI helped you communicate your experience more effectively. If no, it helped you construct a fiction that will collapse at first contact. The technology has no opinion about which outcome you choose, and that choice belongs entirely to you.