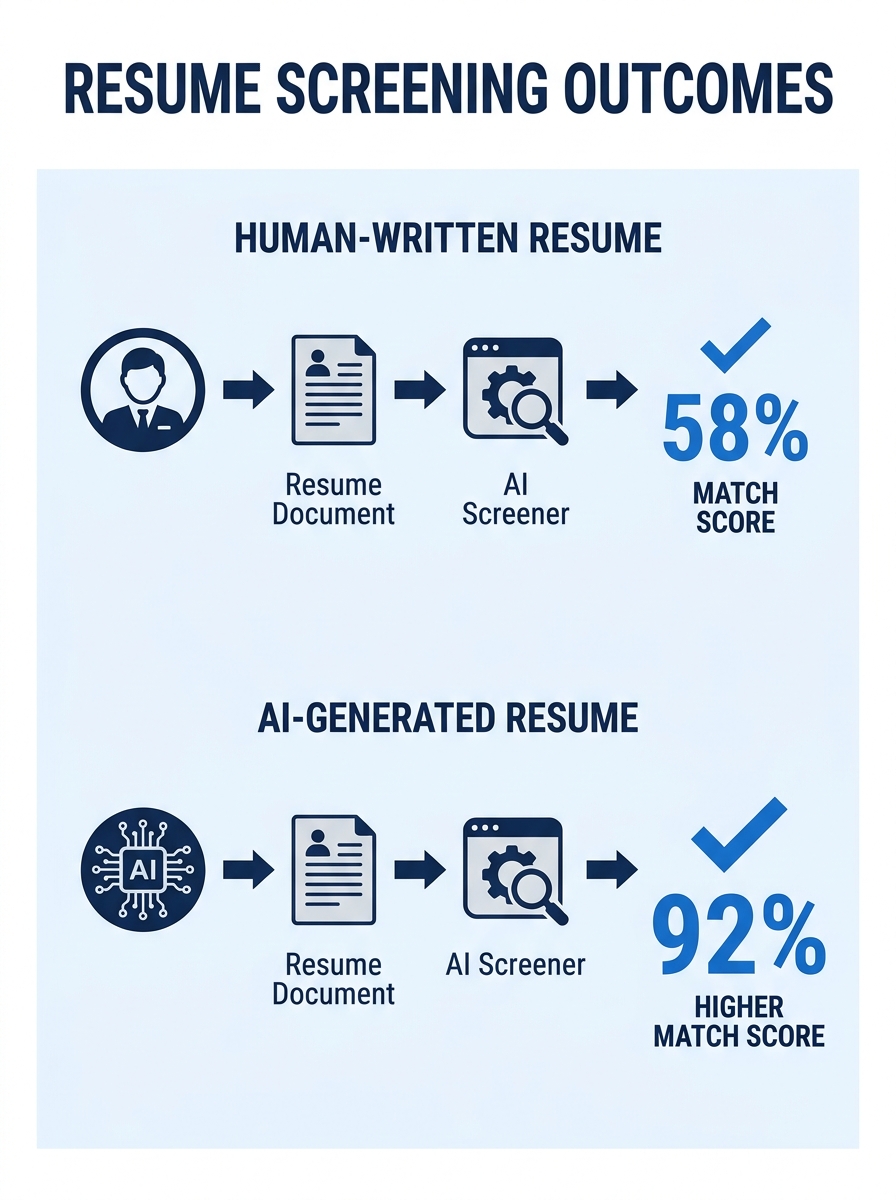

Large language models prefer resumes that were written by large language models. A 2026 empirical study across 2,245 resumes and 24 occupations confirmed what hiring critics had suspected: when an AI system screens applications, candidates who used that same AI to write their resume gain a measurable advantage over equally qualified applicants who wrote their own.

The study, conducted by researchers Xu, Li, and Jiang, found that self-preference bias ranged from 67% to 82% across major commercial models including GPT-4o, LLaMA 3.3-70B, and Qwen 2.5-72B. In simulated hiring pipelines, candidates using the same LLM as the evaluator were 23% to 60% more likely to be shortlisted. The bias was strongest in business-related fields like sales and accounting, where stylistic alignment with corporate language conventions amplified the evaluator’s preference.

This is AI-generated resume bias in its most literal form: algorithms preferring content that sounds like them. And it creates a genuinely uncomfortable situation for anyone trying to figure out whether to write their own resume, pay a professional, or hand the whole thing to ChatGPT.

How Self-Preferencing Actually Works

The mechanism behind this ATS algorithm preference is surprisingly intuitive once you see it. LLMs don’t consciously “recognize” their own output the way a person might recognize their handwriting. Instead, they assign higher relevance scores to text that follows the statistical patterns they were trained to produce. When a resume uses the same syntactic structures, word-frequency distributions, and phrasing conventions that the screening model would generate, it scores higher on internal quality metrics.

Think of it like a teacher who unconsciously gives better grades to essays that mirror their own writing style. The teacher isn’t trying to be biased. They’ve just internalized a particular definition of “good writing,” and anything that matches it feels more polished, more professional, more right.

This creates a feedback loop in algorithmic resume screening. As more candidates use AI tools to write their resumes (and roughly 50% now do, depending on which survey you trust), the training data for future screening models skews further toward AI-generated text. The baseline for what a “normal” resume looks like shifts. Human-written resumes, with their idiosyncratic phrasing and varied sentence structures, gradually start looking like outliers.

The Demographic Bias Layer Underneath

Self-preferencing would be concerning enough on its own, but it sits on top of a well-documented layer of racial and gender bias in AI screening systems. Research from the University of Washington found significant racial, gender, and intersectional bias in how three major LLMs ranked resumes, with models consistently favoring white-associated names. A separate simulation from Brookings confirmed that LLMs caused significant discrimination against Black men in resume screening contexts.

So the picture that emerges is layered. AI screening tools can penalize you for your name, your gender associations, and now, for whether you used the “right” AI tool to write your resume in the first place. These biases compound. A candidate who writes their own resume and happens to have a name associated with a minority group faces two headwinds simultaneously.

Algorithms preferring content that sounds like them creates a genuinely uncomfortable situation for anyone trying to figure out whether to write their own resume or hand the whole thing to ChatGPT.

If your instinct right now is to throw up your hands and conclude that the whole system is broken, that’s reasonable. But the practical reality is that algorithmic screening isn’t going away. Employers receive hundreds of applications per posting, and automated filtering is the only way most hiring teams can manage the volume. The question becomes: how do you write a resume that performs well in this environment without losing what makes you a distinctive candidate?

The Resume Authenticity vs. Optimization Tradeoff

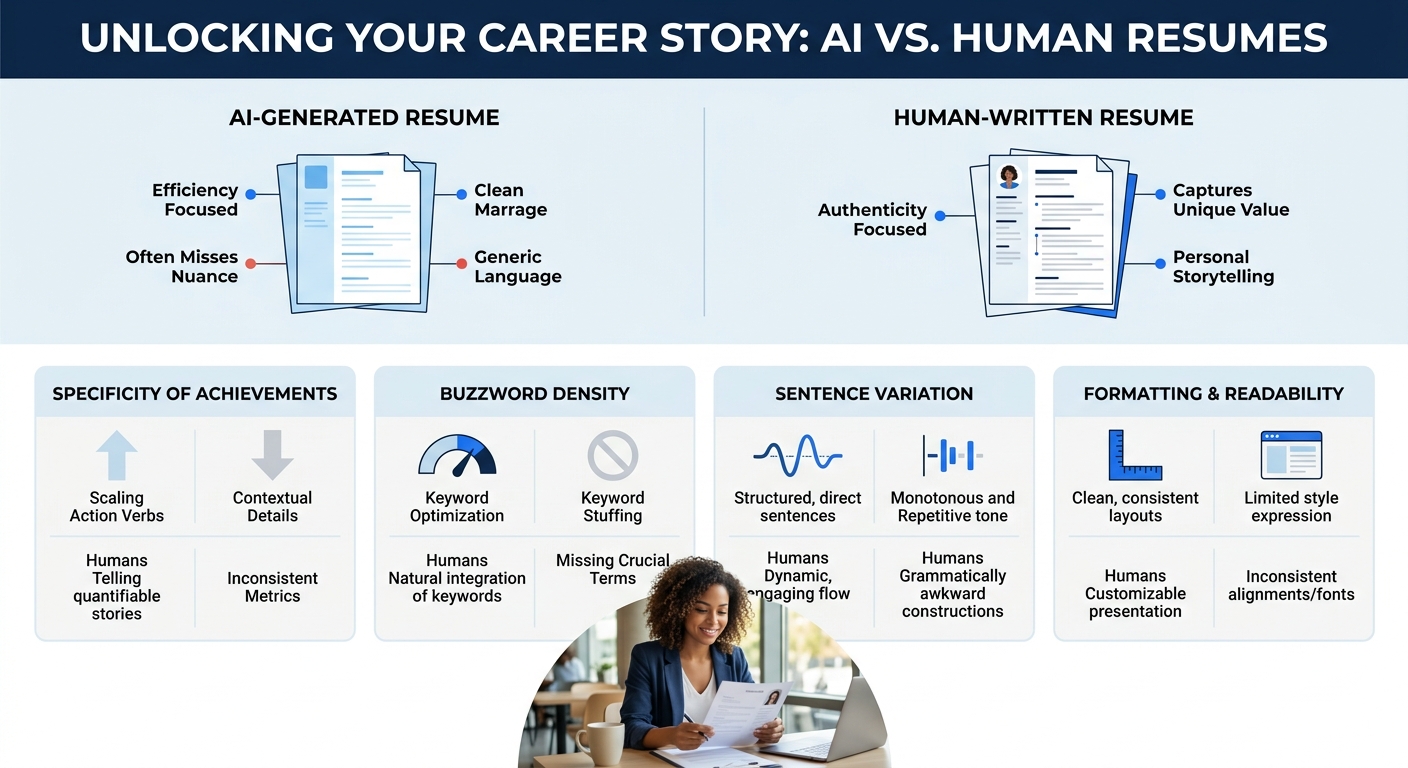

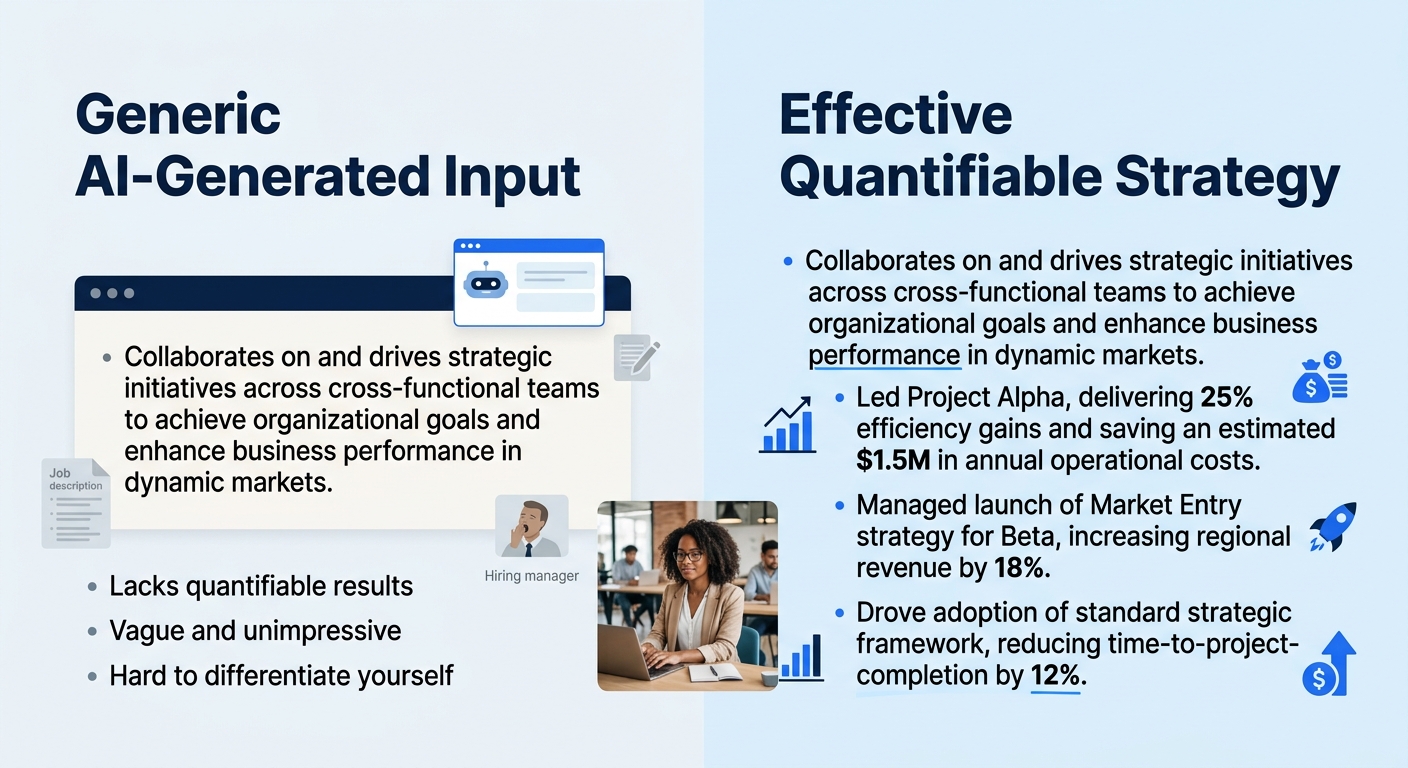

Here’s where the tension between resume authenticity vs. optimization gets concrete. AI-generated resumes tend to share recognizable traits: flawless grammar, generic buzzword density, and sentences that sound polished but say surprisingly little on close reading. As hiring platform Willo documented, you can read an AI-generated resume paragraph twice and still not understand what the candidate actually did. The grammar is perfect. The meaning is hollow.

Recruiters are catching on. We’ve covered how the flood of AI-polished resumes is pushing recruiters back toward personal branding signals that can’t be faked, and the gap between your AI-polished document and the person who shows up for the interview is a topic we’ve explored in depth when discussing the authenticity problem in AI-rewritten resumes. The screening algorithm might love your resume. The human interviewer who meets you fifteen minutes later might wonder who actually wrote it.

This is the paradox playing out in real time: optimizing purely for algorithmic screening can hurt you at the human evaluation stage, and writing purely for human readers can get you filtered out before a person ever sees your name.

A Realistic Strategy for Competing on Both Fronts

The good news is that the Xu, Li, and Jiang research also tested interventions that reduced self-preferencing bias significantly. Modified system prompts that instructed evaluator models to ignore the origin of a resume reduced bias by 17% to 63% across models. Ensemble evaluation using multiple models cut self-preference by more than 50%. These are changes happening on the employer side, and they’ll take time to roll out broadly.

On your side, here’s what works right now:

Use AI as an editor, not a ghostwriter. Write the first draft yourself, rooted in your actual accomplishments and specific outcomes. Then use AI tools to check keyword alignment with the job description and clean up awkward phrasing. This gives you the stylistic signals that screening algorithms respond to while preserving the specificity that human reviewers look for. We’ve dug into when AI rewriting helps and when it backfires if you want a more detailed framework for this.

Anchor every bullet in a measurable outcome. AI-generated bullets tend toward vague competency language (“managed cross-functional teams to drive results”). Human-written bullets at their best include specific numbers, project names, or before-and-after comparisons. Replacing weak verb-first bullets with outcome-driven language that passes both ATS and human review is the single highest-return edit most candidates can make.

Format for parsability, not beauty. Poorly formatted resumes confuse parsing algorithms, causing them to misread information or miss critical details entirely. Standard section headings (“Work Experience,” “Skills,” “Education”), single-column layouts, and common file formats (.docx, .pdf with selectable text) ensure the algorithm reads what you intended it to read. The sweet spot is a clean format with strategic keyword placement, not a graphic-heavy design portfolio and not a keyword-stuffed wall of text.

Tip: Before submitting, paste your resume into a plain-text editor. If the content becomes unreadable or the sections scramble, an ATS parser will struggle with it too. Fix the formatting before worrying about word choice.

Don’t chase one model’s preferences. Since self-preferencing varies by model, and you rarely know which LLM a given employer’s ATS uses, writing for a single tool’s style is a losing bet. Writing clearly, specifically, and with genuine detail about what you accomplished is the approach most resilient to model variation. Generic optimization breaks when the target changes. Specificity works everywhere.

Prepare for the interview to match the resume. The growing use of AI across the entire hiring process, from resume writing to live interview responses, means employers are increasingly testing for consistency between what’s on paper and what a candidate can articulate in person. If your resume claims you “reduced deployment time by 40% through containerization pipeline redesign,” you should be able to explain what was slow, what you changed, and why it mattered, without reaching for buzzwords.

For anyone navigating this landscape, the resources at ResumeWriting.net can help you find the balance between optimization and authenticity that this moment demands.

The Questions That Haven’t Been Answered

The self-preferencing research is still new, and several critical questions remain open. The biggest one: how widespread is LLM-based screening in actual hiring pipelines today? The Xu, Li, and Jiang study used simulated pipelines. Real-world adoption data is thin. Some employers are using LLMs directly for initial screening. Many still rely on older keyword-matching ATS systems that don’t exhibit self-preferencing behavior because they aren’t generative models. The gap between “this could happen” and “this is happening to you right now” matters for deciding how much to adjust your approach.

There’s also no consensus on regulation. The World Economic Forum has noted that algorithms can help mask demographic information and reduce certain types of bias, but no widely adopted standard exists for auditing AI screening tools for self-preferencing specifically. The auditing frameworks that do exist, like adverse impact testing, were designed for demographic bias and don’t capture style-based advantage at all.

And the competitive dynamics keep shifting. As AI-written resumes become the norm, the signal that screening models extract from stylistic polish will degrade. When every resume sounds the same, the differentiator moves elsewhere: to referrals, portfolios, interview performance, and the specificity of your accomplishments. The paradox may resolve itself over time, but the transition period is where real candidates are losing real opportunities to algorithmic quirks they can’t see and can’t control. Understanding the mechanics gives you the best shot at navigating a system that wasn’t designed with fairness to individuals in mind.