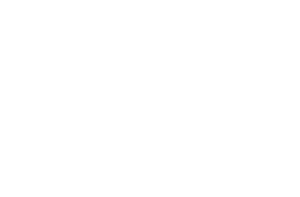

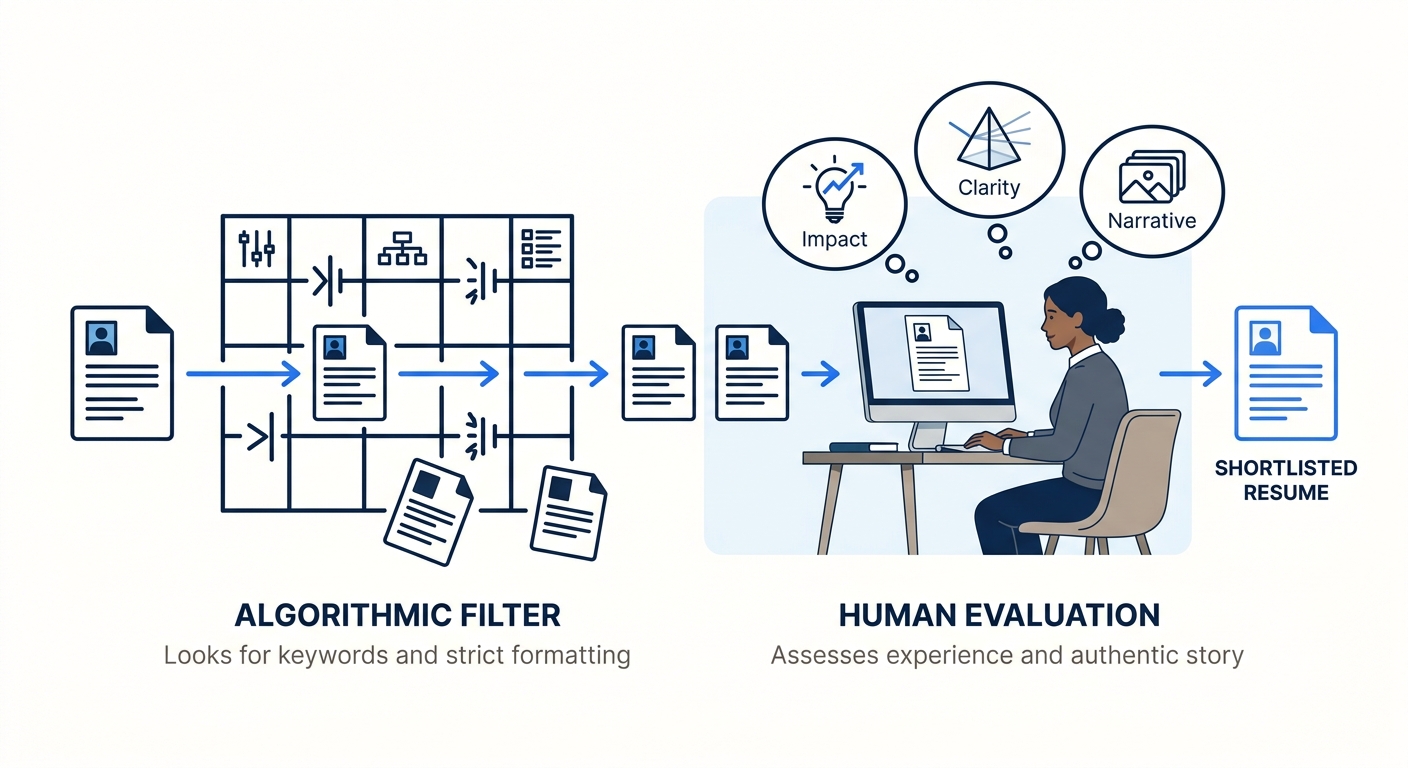

The resume that lands on a hiring manager’s screen has already been translated twice before the person making the decision ever reads it. First, the applicant tracking system parsed it, stripping your layout, extracting keywords, and mapping your experience to database fields. Then a recruiter glanced at the parsed output, decided it matched enough criteria, and forwarded a text-only version to the person who’ll actually decide whether to call you. By the time that hiring manager reads your work history, the document bears little resemblance to what you submitted. This creates a problem the resume optimization industry doesn’t address well: the tactics that maximize your ATS match score actively degrade the reading experience for the human who makes the hiring call.

The Two Audiences Want Opposite Things

ATS platforms evaluate your resume through keyword density and structural compliance. They want standardized section headers like “Work Experience” and “Education.” They want clean .docx files with single-column layouts. They want exact-match phrases pulled from the job description. As UIC’s career services office notes in their ATS optimization guide, even basic formatting choices like headers, footers, borders, and symbols can prevent a system from reading your content at all. So you strip everything down, remove design elements, stuff your skills section with terms from the posting, and watch your parsed score climb.

The hiring manager who reads the result has a completely different evaluation framework. They’re scanning for clarity, impact, and evidence of judgment. Recruiters now spend roughly eleven seconds per resume before deciding whether to continue reading, and in that window, they’re not counting keyword matches. They’re looking for sentences that communicate what you actually accomplished and why it mattered. A bullet point that reads “Executed cross-functional stakeholder alignment initiatives leveraging agile methodology frameworks” hits several keyword targets simultaneously while communicating almost nothing to a human being. The hiring manager doesn’t see your ATS score. They see a paragraph of buzzwords and move on.

This dual-audience problem is structural. The ATS rewards specificity of terminology: exact phrases, industry acronyms written out with their abbreviations in parentheses, technical skill names repeated across multiple sections. Human readers punish repetition. They find it tedious and interpret it as padding. So the document that earns a 90+ match score and the document that makes a hiring manager want to pick up the phone are frequently working against each other, and most resume advice treats them as though they’re the same challenge.

Where Keyword Strategy Becomes Keyword Noise

The conventional wisdom around resume readability vs. keyword matching goes something like this: include the right keywords, but do it naturally. That advice sounds reasonable until you try to follow it. A typical job posting for a mid-level marketing role might contain 40 to 60 distinct terms and phrases that an ATS could be configured to search for. Fitting all of them into a two-page document “naturally” is a writing challenge that would give a professional copywriter trouble, and most job seekers aren’t professional copywriters.

What happens in practice is predictable. People front-load their skills sections with long lists of terms, repeat the same phrases in multiple bullet points, and add a keyword-rich summary paragraph at the top that reads like a word cloud arranged into sentences. The resume passes the automated screen. Then it arrives on a desk where a human being tries to figure out what this person actually did at their last three jobs, and the signal is buried under so much optimization noise that the real accomplishments don’t land. We’ve written before about how AI-enhanced bullet points can fail both systems simultaneously, and the root cause is usually this same tension between density and clarity.

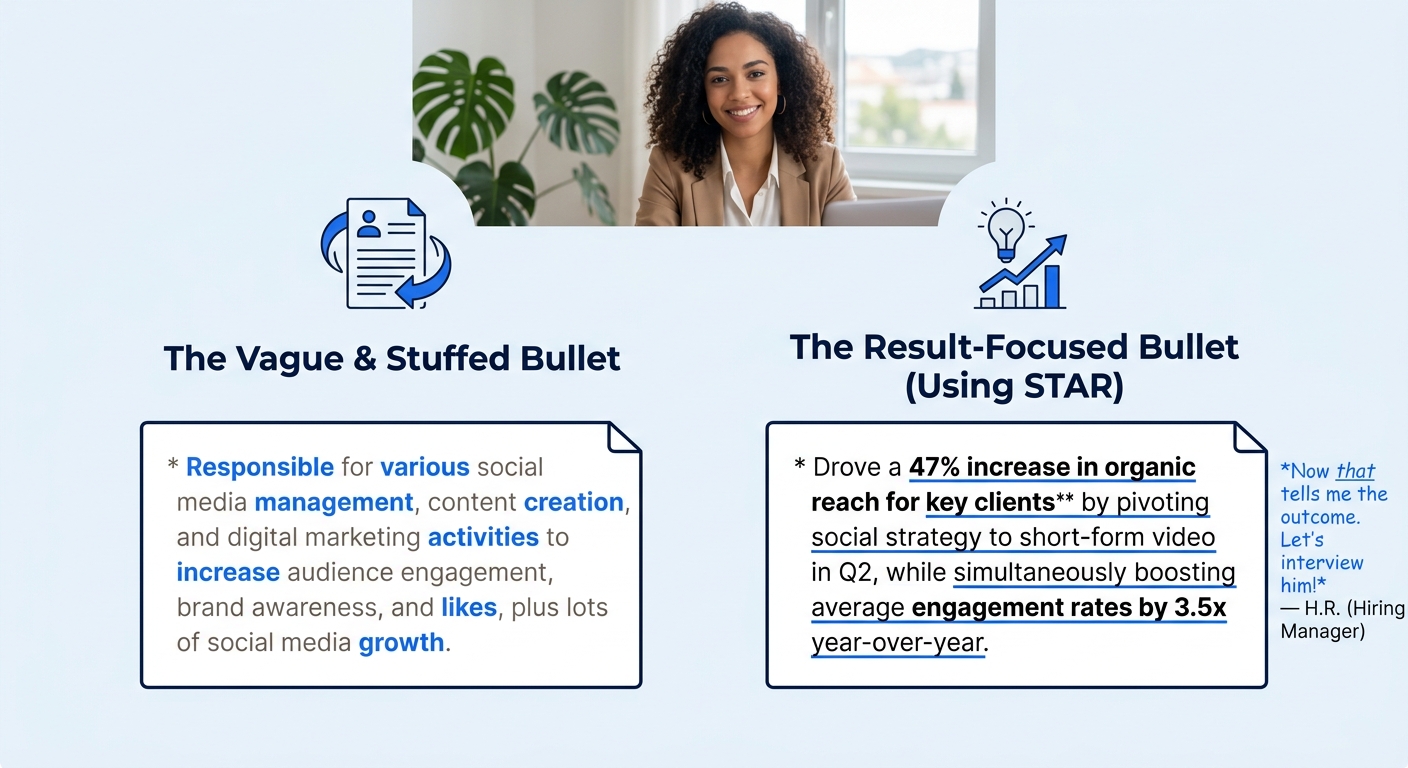

The practical middle ground requires accepting that you can’t optimize for every keyword in the posting. Pick the 10 to 15 terms most central to the role, integrate them into your experience bullets with genuine context and measurable outcomes, and let the less critical terms go. A bullet like “Reduced customer onboarding time by 34% by redesigning the self-service portal workflow” hits keywords (onboarding, portal, workflow) while giving the hiring manager something concrete to evaluate. A bullet like “Managed onboarding processes and portal optimization workflows for customer-facing self-service platforms” hits more keywords and says less. Your ATS score for the second version might be three points higher. Your odds of getting a phone call from the person reading it are meaningfully lower.
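To make the trade-off concrete, here is a minimal sketch that counts keyword hits in the two example bullets and checks whether each one contains a number. The keyword list, the substring matching, and the function names are all assumptions made for illustration; real ATS platforms do not publish their scoring rules, and this is not how any particular product works.

```python
import re

# Hypothetical keyword list for illustration only; a real posting and a
# real ATS configuration would differ.
KEYWORDS = [
    "onboarding", "portal", "workflow", "self-service",
    "optimization", "customer-facing", "processes", "platforms",
]

def keyword_hits(bullet, keywords=KEYWORDS):
    """Return the keywords that appear in the bullet (crude substring match)."""
    text = bullet.lower()
    return [kw for kw in keywords if kw in text]

def has_metric(bullet):
    """Rough proxy for a measurable outcome: the bullet contains a digit."""
    return re.search(r"\d", bullet) is not None

concrete = ("Reduced customer onboarding time by 34% by redesigning "
            "the self-service portal workflow")
padded = ("Managed onboarding processes and portal optimization workflows "
          "for customer-facing self-service platforms")

for bullet in (concrete, padded):
    print(len(keyword_hits(bullet)), has_metric(bullet), bullet)
```

Against this made-up list, the padded bullet matches all eight terms and the concrete bullet matches four, but only the concrete bullet contains a measurable result. A keyword counter sees the first fact. A hiring manager sees the second.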

Nancy Light, a career coach who writes extensively about keyword strategy, frames the priority clearly: your resume must still be readable and engaging for humans. The ATS is a gatekeeper, but the hiring manager is the decision maker. If you optimize so aggressively for the gate that you bore the person standing behind it, you’ve solved the wrong problem. And this trade-off applies to formatting decisions too. One designer on Reddit’s UX community shared that they built a resume in Figma with thoughtful font hierarchy and subtle color variation, and it still scored 99% compatible with ATS scanners. The lesson: clean visual design and machine readability can coexist, but only when you understand which specific design choices cause parsing failures (tables, text boxes, multi-column layouts) and which ones are safe (font variation, hierarchy, strategic use of white space).

Mapping your genuine skills into bullet points that translate for human readers is a different discipline from keyword-matching against a job description. Both matter. But writing for the human reader is the skill that actually gets you hired, and it’s the one that optimization tools can’t score.

The Bias Layer Underneath the Optimization

There’s a dimension to ATS optimization trade-offs that goes beyond formatting and keyword counts. The algorithmic systems making initial screening decisions carry biases that your optimization strategy can’t fully account for, and hiring managers on the other end of the pipeline carry their own set of biases that interact with the ones baked into the software. Research from the Brookings Institution has documented how language model-based resume screening introduces gender, race, and intersectional bias through the way these systems calculate similarity between resume text and job descriptions. The cosine similarity scores that determine whether your resume surfaces at all are shaped by training data that reflects existing hiring patterns, including discriminatory ones.

What this means practically is that two candidates with identical qualifications can receive different algorithmic rankings based on linguistic patterns associated with their demographic backgrounds. We’ve covered how AI resume tools rate identical content differently based on perceived gender, and the implications for your optimization strategy are uncomfortable. You can follow every ATS formatting rule, hit every keyword, and still get filtered because the system’s understanding of what a “good” resume looks like was trained on historically biased data. The algorithmic bias in resume tools creates a floor of unfairness that individual optimization can’t fully overcome.
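For readers unfamiliar with the cosine similarity scores mentioned above, the following is a simplified sketch of the arithmetic. It assumes plain word-count vectors, whereas the screening systems described in the research use learned language model embeddings, which is where training-data bias enters. The function names are hypothetical.

```python
import math
import re
from collections import Counter

def term_counts(text):
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine_similarity(resume_text, job_text):
    """Cosine of the angle between two word-count vectors (0.0 to 1.0)."""
    a, b = term_counts(resume_text), term_counts(job_text)
    # Dot product over the words the two texts share.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(c * c for c in a.values()))
            * math.sqrt(sum(c * c for c in b.values())))
    # An empty text has no direction, so treat its similarity as zero.
    return dot / norm if norm else 0.0

job = "Manage customer onboarding and improve the self-service portal"
resume = "Redesigned the self-service portal to speed up customer onboarding"
print(round(cosine_similarity(resume, job), 2))
```

With word counts, the score depends only on shared vocabulary. With learned embeddings, the vectors also encode associations absorbed from training data, so two resumes describing the same qualifications in different language can land at different distances from the same job description.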

Hiring manager screening introduces its own layer of bias, of course, but at least a human reader can respond to a compelling narrative, recognize an unconventional career path as an asset, or appreciate a writing voice that stands out from the stack. The machine can’t do any of that. It matches patterns. And when those patterns carry embedded discrimination, the candidates who most need to communicate their unique value through their writing are the same ones most likely to be filtered before a human ever reads what they wrote. This is a tension the new AI-driven resume screening paradigm has amplified rather than resolved.

Where I Remain Unsure

The honest answer to the ATS-to-hiring-manager translation gap is that no single document can perfectly serve both audiences, and the industry’s insistence that you can achieve a “perfect score” while writing naturally for humans papers over a real contradiction. You are writing for a pattern-matching algorithm and a tired human being who reads dozens of resumes in a sitting, and these two readers want different things from your text. The best you can do is triage: satisfy the ATS’s minimum requirements for parsing and keyword relevance, then spend the remaining effort making your bullets clear, specific, and grounded in outcomes that a person can evaluate in under fifteen seconds.

What bothers me about the current state of resume advice is how much of it treats the ATS as the harder problem. Jobscan and similar platforms have built entire businesses around scoring your keyword match rate, and that score feels objective in a way that “will a hiring manager find this compelling?” does not. But the data on how rapidly recruiters filter candidates suggests that the human-readability problem is at least as consequential. Getting past the ATS means your resume enters the stack. Getting past the human means you get an interview. These are different challenges, and overinvesting in the first at the expense of the second is a mistake I see constantly, especially among candidates who’ve been job-searching for a while and have internalized the idea that the algorithm is the primary obstacle.

The unsatisfying truth is that you’ll need to make trade-offs, and nobody can tell you exactly where to draw the line because the answer depends on the specific ATS platform the company uses, the specific hiring manager’s reading preferences, and the competitive field for the particular role. Strip your formatting enough to parse cleanly. Hit the core keywords with enough frequency to rank. Then write bullet points that a real person would want to read, the kind of measurable, specific accomplishments that give hiring managers something to grab onto in those few seconds of attention. You’re writing a document that has to survive a machine translation and then persuade a human, and the tension between those two goals isn’t going away. Learning to manage that tension honestly, rather than pretending a high match score solves everything, is the actual skill that separates candidates who get interviews from candidates who keep optimizing in the dark.